Video Article Open Access

Artificial Intelligence for Virtual Medical Imaging for Accurate Diagnosis

Kenji Suzuki

Institute of Innovative Research, Tokyo Institute of Technology, Yokohama, Kanagawa 226-8503, Japan

Vid. Proc. Adv. Mater., Volume 2, Article ID 2021-03156 (2021)

DOI: 10.5185/vpoam.2021.03156

Publication Date (Web): 07 May 2021

Copyright © IAAM

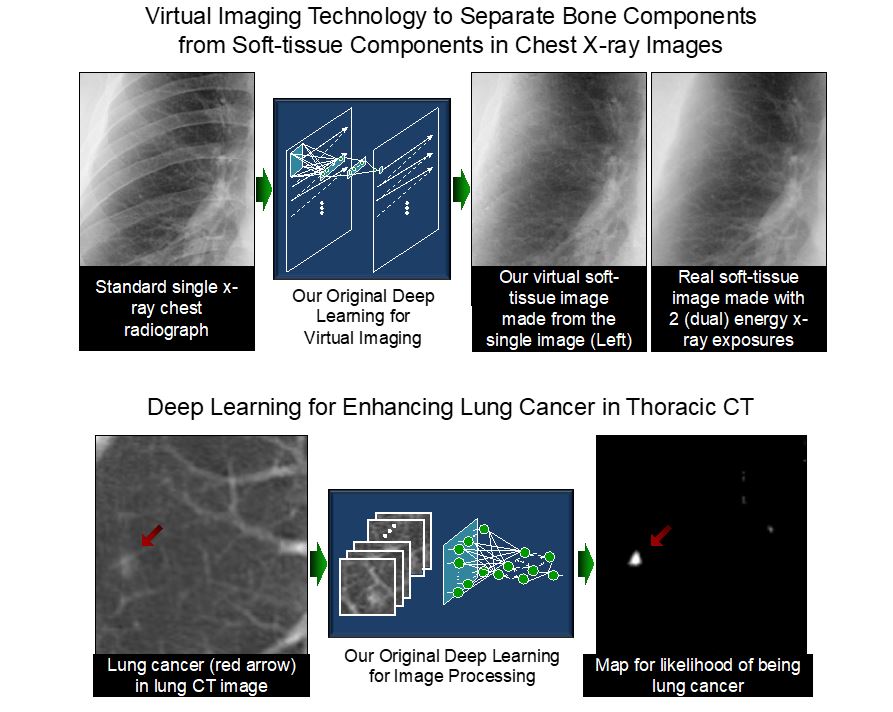

Graphical Abstract

Abstract

Artificial intelligence (AI) driven by deep learning has been described as a driving force of the Fourth Industrial Revolution. Deep learning has become one of the most active areas of research in virtually every field, because “learning from data” is essential for handling the large amounts of data (“big data”) that modern systems produce. Deep learning is a versatile, powerful framework that can acquire image-processing and analysis functions through training with example images; as an end-to-end machine-learning model, it maps raw input data directly to the desired outputs. Dr. Suzuki invented some of the earliest deep-learning models for image processing, segmentation of objects or materials, enhancement of objects or materials, and classification of patterns or materials in medical images. He has been actively studying AI, including deep learning and machine learning, AI for “virtual medical imaging”, and AI-aided diagnosis, for the past 25 years [1]. In 1994, he pioneered the development of machine-learning models that learn directly from images; models similar to his are now called deep learning. AI based on his early deep-learning models, called massive-training artificial neural networks (MTANNs), acquires the knowledge and skills of experts and transfers them to junior colleagues, contributing to a sustainable global society. His AI models are general and applicable to many fields, including materials engineering, medicine, computer vision, and industry. His AI for medical imaging reduces the radiation dose to patients by more than 90%, so that people need no longer worry about radiation exposure in medical exams, contributing to a reduction in radiation exposure worldwide. His AI invention for separating different materials, such as bone components from soft-tissue components in x-ray images [2], called “virtual dual-energy x-ray imaging”, received FDA approval and was commercialized in 2010, and it is used in hospitals worldwide.
This product was the world's first deep-learning product to obtain FDA approval. In his talk, he introduces AI-based virtual medical imaging, medical image processing, pattern recognition, and AI-aided diagnosis with deep learning, including: 1) virtual medical imaging for separating bones from soft tissue in chest x-ray images; 2) virtual medical imaging for converting low-radiation-dose images into virtual high-radiation-dose images to reduce the radiation dose in x-ray imaging and computed tomography (CT); 3) computer-aided diagnosis of lesions in CT and x-ray images [3-5]; and 4) semantic segmentation of lesions and organs in medical images. These AI-based computational technologies make it possible to image materials such as tissues, lesions, and anatomic structures in medical images using software alone, without specialized equipment or devices; hence the term “virtual imaging”.
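In the MTANN literature, the network learns a direct mapping from a small subregion of the input image to the value of the corresponding pixel in a "teaching" image, and it is trained on a large number of such subregion/pixel pairs. The Python code below is a minimal, hypothetical sketch of that patch-to-pixel regression idea applied to bone/soft-tissue separation; it is not the author's implementation. It assumes a single hidden layer of sigmoid units with one linear output unit, a 5x5 subregion, full-batch gradient descent, and a synthetic "chest" image in which horizontal stripes stand in for ribs. The names `extract_patches` and `PatchRegressor`, the image, and all sizes and learning rates are illustrative assumptions. Subtracting the predicted bone-like component from the input yields a soft-tissue-like estimate.

```python
import numpy as np


def extract_patches(image, size):
    """Flatten every size x size subregion whose centre lies at least size//2 from the border."""
    r = size // 2
    h, w = image.shape
    patches, coords = [], []
    for i in range(r, h - r):
        for j in range(r, w - r):
            patches.append(image[i - r:i + r + 1, j - r:j + r + 1].ravel())
            coords.append((i, j))
    return np.array(patches), coords


class PatchRegressor:
    """Hypothetical one-hidden-layer regressor: sigmoid hidden units, one linear output unit."""

    def __init__(self, n_in, n_hidden, seed=0):
        rng = np.random.default_rng(seed)
        self.W1 = rng.normal(0.0, 0.1, (n_in, n_hidden))
        self.b1 = np.zeros(n_hidden)
        self.W2 = rng.normal(0.0, 0.1, n_hidden)
        self.b2 = 0.0

    def forward(self, X):
        H = 1.0 / (1.0 + np.exp(-(X @ self.W1 + self.b1)))
        return H, H @ self.W2 + self.b2  # linear output: one pixel value per patch

    def fit(self, X, y, lr=0.1, epochs=500):
        """Full-batch gradient descent on the mean-squared error; returns the loss history."""
        n = len(y)
        losses = []
        for _ in range(epochs):
            H, out = self.forward(X)
            err = out - y
            losses.append(float(np.mean(err ** 2)))
            g_out = 2.0 * err / n                              # dLoss/dOutput
            g_hid = np.outer(g_out, self.W2) * H * (1.0 - H)   # back through the sigmoid (old W2)
            self.W2 -= lr * (H.T @ g_out)
            self.b2 -= lr * g_out.sum()
            self.W1 -= lr * (X.T @ g_hid)
            self.b1 -= lr * g_hid.sum(axis=0)
        return losses

    def predict_image(self, image, size):
        """Scan the trained model over the image; border pixels stay at zero."""
        X, coords = extract_patches(image, size)
        _, out = self.forward(X)
        result = np.zeros(image.shape)
        for (i, j), v in zip(coords, out):
            result[i, j] = v
        return result


# Synthetic example (an assumption, not real radiographs): smooth background plus stripes.
yy, xx = np.mgrid[0:32, 0:32]
soft = 0.5 + 0.2 * np.sin(xx / 6.0)            # slowly varying "soft tissue"
bone = 0.3 * (np.sin(yy * 0.8) > 0.6)          # horizontal stripes standing in for ribs
chest = soft + bone                            # input image
X, coords = extract_patches(chest, 5)          # 25 pixel values per training sample
y = np.array([bone[i, j] for i, j in coords])  # teaching value at each patch centre

model = PatchRegressor(n_in=25, n_hidden=8)
losses = model.fit(X, y, lr=0.1, epochs=500)
bone_estimate = model.predict_image(chest, 5)
soft_estimate = chest - bone_estimate          # "virtual" soft-tissue image
print(f"MSE: {losses[0]:.4f} -> {losses[-1]:.4f}")
```

In this sketch, pixels within half a patch width of the border are left at zero in the predicted bone component, so the soft-tissue estimate there simply equals the input; a practical system would need a border strategy such as padding and would be trained on real paired images rather than a synthetic stripe pattern.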

Keywords

Virtual imaging; deep learning; artificial intelligence; material separation; virtual dual-energy imaging.

References

- Suzuki K.: Overview of Deep Learning in Medical Imaging. Radiological Physics and Technology (Springer-Nature) 10(3): 257-273, 2017 (Awarded the Most Citation Award 2019 from Japan Society of Medical Physics (JSMP) and Japanese Society of Radiological Technology (JSRT))

- Suzuki K., Abe H., MacMahon H., and Doi K.: Image-processing technique for suppressing ribs in chest radiographs by means of massive training artificial neural network (MTANN). IEEE Transactions on Medical Imaging 25: 406-416, 2006.

- Suzuki K.: Machine Learning in Computer-aided Diagnosis of the Thorax and Colon in CT: A Survey. IEICE Transactions on Information & Systems E96-D: 772-783, 2013 (Awarded the 2014 Best Paper Award from IEICE).

- Suzuki K., Rockey D. C., and Dachman A. H.: CT colonography: Advanced computer-aided detection scheme utilizing MTANNs for detection of "missed" polyps in a multicenter clinical trial. Medical Physics 37: 12-21, 2010.

- Tajbakhsh N. and Suzuki K.: Comparing Two Classes of End-to-End Machine-Learning Models in Lung Nodule Detection and Classification: MTANNs vs. CNNs. Pattern Recognition 63(3): 476-486, 2017.

Biography

Kenji Suzuki, Ph.D. (by Published Work; Nagoya University), worked at Hitachi Medical Corp., Japan; at Aichi Prefectural University, Japan, as a faculty member; and in the Department of Radiology, University of Chicago, as Assistant Professor. In 2014, he joined the Department of Electrical and Computer Engineering and the Medical Imaging Research Center, Illinois Institute of Technology, as Associate Professor (Tenured). In 2017, he was jointly appointed to the World Research Hub Initiative, Institute of Innovative Research, Tokyo Institute of Technology, Japan, as Professor (Specially Appointed, part-time), and later as Professor (Specially Appointed, full-time). He has published 340 papers, including 115 peer-reviewed papers in leading journals such as IEEE TPAMI (Impact Factor: 17.9), IEEE TIP (IF: 9.3), IEEE TII (IF: 9.1), Pattern Recognition (IF: 7.2), and IEEE TMI (IF: 6.7). He has been actively studying artificial intelligence (AI), including deep learning and machine learning, AI in medical imaging, and AI-aided diagnosis, for the past 25 years. In 1994, he pioneered the development of machine-learning models that learn directly from images; models similar to his are now called deep learning. His papers have been cited 12,900 times, and his h-index is 50. He is an inventor on 36 patents (including some of the earliest deep-learning patents), which have been licensed to several companies and commercialized via FDA approvals. He has published 14 books and 28 book chapters and has edited 12 journal special issues. He has been awarded a number of grants as PI, including NIH R01, ACS, JST, and NEDO grants, totaling $7.6M, and, as Co-PI, subawards totaling $9.8M. He has served as Editor-in-Chief or Associate Editor of a number of leading international journals, such as Pattern Recognition (IF: 7.2) and Neurocomputing (IF: 4.4).
He has served as a referee for 116 international journals, such as Science Translational Medicine (IF: 16.3) and Nature Communications (IF: 12.1), as an organizer of 97 international conferences, and as a program committee member of 114 international conferences. He has given 38 keynote speeches at international conferences and 92 invited talks at universities. He has received 20 awards, including the Springer-Nature EANM Most Cited Journal Paper Award 2016, the 2017 Albert Nelson Marquis Lifetime Achievement Award, and the 2019 Radiological Physics and Technology (Springer) Most Citation Award. His research has been covered in 49 articles in newspapers, magazines, and journals, including Lancet Respiratory Medicine (IF: 25.1).

Video Proceedings of Advanced Materials
